10 projects - #9

microcolor

training a neural network to colorize microscopy images

project goal

Most microscopy is either black and white or has very low color saturation. Electron microscopy cannot image a subject in color at all, since it records only a grayscale intensity. Color versions of electron microscope images exist, but the color is added by hand. My goal was to find a way to colorize microscope imagery automatically.

methods

Neural networks have shown great promise in converting black and white images to color. In particular, the DeOldify network can produce outstanding results for typical photo imagery. I had used this network in the past to colorize microscope images. Occasionally I had success, but frequently the network assigned the image a drab greenish yellow or wouldn't colorize it at all. I assume this is because microscopy is far outside the network's training domain, which gave me hope that a network could do a good job at colorization if I had the right dataset.

An example of an image that DeOldify colorizes well.

An example of an image that DeOldify hardly colorizes at all.

For my training dataset, I used almost 2500 images from 45 years of prizewinning photos from Nikon's Small World photo competition. These images are typically beautifully colorized or capture a vibrant microscopic scene. I converted each image to grayscale to produce an input that corresponds to its color target.
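
As a rough illustration of building one grayscale/color training pair, here is a minimal sketch. The post does not say how the grayscale conversion was done, so the Rec. 601 luma weights and the helper names `to_grayscale` and `make_training_pair` are assumptions, and a tiny synthetic array stands in for a real competition photo.

```python
import numpy as np

# Rec. 601 luma weights. This is an assumption: the original post does not
# say which grayscale conversion was used.
LUMA_WEIGHTS = np.array([0.299, 0.587, 0.114])


def to_grayscale(rgb):
    """Convert an (H, W, 3) uint8 RGB image to an (H, W) uint8 grayscale image."""
    gray = rgb.astype(np.float64) @ LUMA_WEIGHTS
    return np.clip(np.rint(gray), 0, 255).astype(np.uint8)


def make_training_pair(rgb):
    """Return (network_input, target) for one color image.

    The grayscale image is repeated across three channels so that the input
    and the color target have the same shape.
    """
    gray = to_grayscale(rgb)
    gray_3ch = np.stack([gray, gray, gray], axis=-1)
    return gray_3ch, rgb


# Tiny synthetic "photo" standing in for a real image.
demo = np.zeros((2, 2, 3), dtype=np.uint8)
demo[0, 0] = [255, 0, 0]      # red
demo[0, 1] = [0, 255, 0]      # green
demo[1, 0] = [0, 0, 255]      # blue
demo[1, 1] = [255, 255, 255]  # white

x, y = make_training_pair(demo)
```

Because the target is the untouched original, every color image yields a labeled example without any manual annotation.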

I attempted to retrain DeOldify, but the system is quite complex and finicky, and I was never able to get good results. Instead I trained a Pix2Pix image translation network. This network is a GAN that takes an input and seeks to transform it into a form that is indistinguishable from a target image. In my case, the black and white image would be transformed to color. The task of colorization is well within the network's capacity, and it started generating plausible results fairly rapidly (within 30 epochs).
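
To make the "indistinguishable from a target image" idea concrete, here is a minimal NumPy sketch of the losses described in the Pix2Pix paper: an adversarial term plus an L1 term that keeps the output close to the target. The function names are hypothetical, the arrays stand in for real network outputs, and the L1 weight of 100 is the paper's default rather than a setting confirmed for this project.

```python
import numpy as np


def bce(pred, target, eps=1e-7):
    """Mean binary cross-entropy between probabilities and 0/1 labels."""
    pred = np.clip(pred, eps, 1.0 - eps)
    return float(np.mean(-(target * np.log(pred) + (1 - target) * np.log(1 - pred))))


def generator_loss(d_on_fake, fake, real, l1_weight=100.0):
    """Adversarial loss (fool the discriminator) plus weighted L1 to the target.

    d_on_fake: discriminator probabilities for (input, generated) pairs.
    fake, real: generated and target color images, same shape.
    l1_weight: 100 is the Pix2Pix paper default (an assumption here).
    """
    adversarial = bce(d_on_fake, np.ones_like(d_on_fake))
    l1 = float(np.mean(np.abs(real - fake)))
    return adversarial + l1_weight * l1


def discriminator_loss(d_on_real, d_on_fake):
    """Real pairs should score 1, generated pairs 0; halved as in the paper."""
    real_term = bce(d_on_real, np.ones_like(d_on_real))
    fake_term = bce(d_on_fake, np.zeros_like(d_on_fake))
    return 0.5 * (real_term + fake_term)


# Stand-in values: a discriminator that cannot tell real from fake (0.5
# everywhere) and a generated image that is off by 0.1 in every pixel.
target = np.full((4, 4, 3), 0.6)
generated = np.full((4, 4, 3), 0.5)
undecided = np.full((2, 2), 0.5)

g_loss = generator_loss(undecided, generated, target)
d_loss = discriminator_loss(undecided, undecided)
```

The L1 term is what ties the output to the specific target image; the adversarial term on its own would only push the generator toward outputs that look plausible.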

results

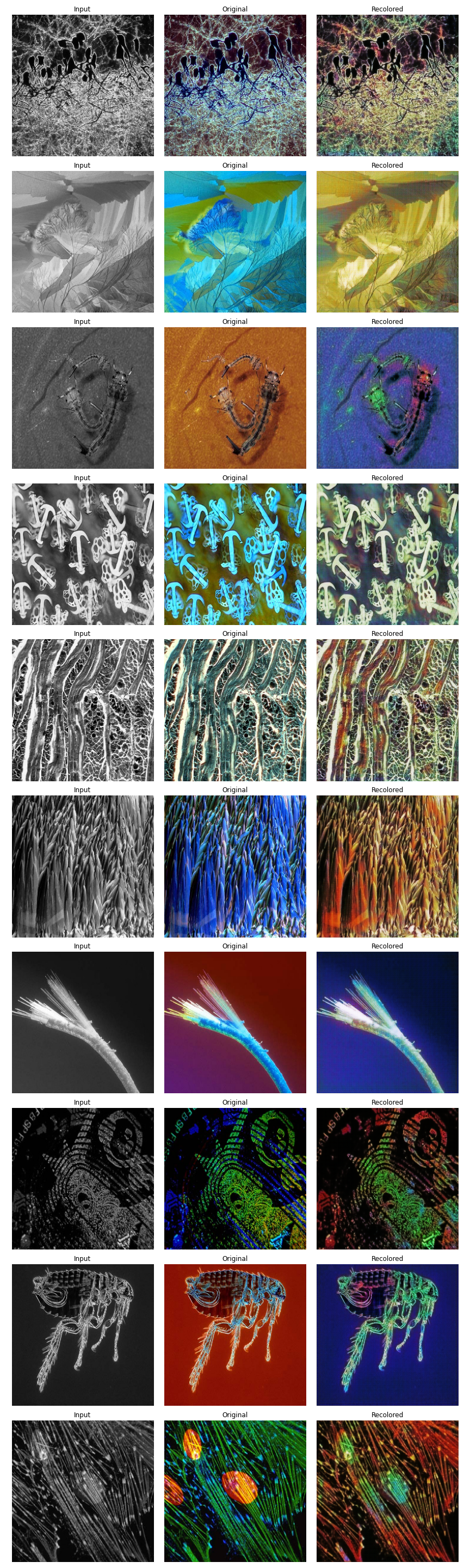

Perceptually, the network does an excellent job of colorization, even with minimal training.

Examples of image colorization. Input image on the left, original image in the center, network output on the right.

Examples of image colorization. Input image on the left, original image in the center, network output on the right.

Examples of image colorization. Input image on the left, original image in the center, network output on the right.

Examples of image colorization. Input image on the left, original image in the center, network output on the right.

conclusion

I'm quite pleased with the outputs, particularly for the short amount of training time. I find it especially fascinating when the network colors the image in a way that is different from the original, but perceptually more beautiful.

what would i do next

- Train the network for longer.

- The network currently only accepts square inputs. I'd like to implement a strategy to accept and output arbitrary image shapes.

- The network would almost certainly perform better if inputs/outputs were in Lab colorspace rather than RGB since the color channels are separate from luminance.

- Test whether the network could be trained to classify cell types by colorization.
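
The Lab idea from the list above can be sketched as follows. This is a minimal NumPy conversion from sRGB to CIE Lab using the standard D65 constants, not code from the project, and the function name `rgb_to_lab` is an assumption. It shows why Lab is attractive here: the L channel carries the luminance, so a network would only need to predict the a and b channels.

```python
import numpy as np

# Standard sRGB (D65) to CIE XYZ matrix and D65 reference white.
RGB_TO_XYZ = np.array([
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
])
WHITE_D65 = np.array([0.95047, 1.0, 1.08883])


def rgb_to_lab(rgb):
    """Convert sRGB values in [0, 1] with shape (..., 3) to CIE Lab."""
    rgb = np.asarray(rgb, dtype=np.float64)
    # Undo the sRGB gamma curve to get linear light.
    linear = np.where(rgb <= 0.04045, rgb / 12.92, ((rgb + 0.055) / 1.055) ** 2.4)
    # Linear RGB -> XYZ, normalized by the reference white.
    xyz = linear @ RGB_TO_XYZ.T / WHITE_D65
    # Piecewise cube-root function from the CIE Lab definition.
    delta = 6.0 / 29.0
    f = np.where(xyz > delta ** 3, np.cbrt(xyz), xyz / (3 * delta ** 2) + 4.0 / 29.0)
    lightness = 116.0 * f[..., 1] - 16.0
    a = 500.0 * (f[..., 0] - f[..., 1])
    b = 200.0 * (f[..., 1] - f[..., 2])
    return np.stack([lightness, a, b], axis=-1)


# White, mid gray, and pure red.
lab = rgb_to_lab([[1.0, 1.0, 1.0], [0.5, 0.5, 0.5], [1.0, 0.0, 0.0]])
```

For white and gray the a and b channels come out at essentially zero, while red has clearly nonzero a and b. A grayscale input therefore already supplies the L channel, and only the two color channels are left to learn.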